May 3, 2026

Samsara: Notes on an Open-Ended Game Engine

Note: This was co-written by an LLM. These are general ideas around the game engine I've been working on. Will do a proper writeup once the main ideas crystallize. Code will also be shared.

Samsara is an attempt at building an open-ended game engine.

Samsara is low-resolution in many places and concrete enough in others to generate playable games. The current stack will improve with AI capabilities. The important part is the substrate future agents can fill in.

Coding agents are now good enough to port subsystems (like terrain generation), one-shot kernels, set up training loops, and use screenshots to get closer to self-verifying game engines. The substrate increasingly matters as they get stronger. No matter how smart your agent is, if it lacks the necessary grammar of observables, it won’t be able to connect the pieces and do what you want it to do.

Solving more of this problem means automating more of open-endedness research.

Open-Endedness

Games like Minecraft are interesting open-endedness substrates: A small ruleset (genotype) unfolds massive ‘interesting’ possibility spaces (phenotypes). While most of the space is trivial or boring, you can find small rule systems that unfold into ridiculous amounts of structure.[1]

Machines generate variations quickly, but humans remain essential for steering the process towards interesting configurations, so the human-agent interface becomes paramount.

You encode games by their genotype: world generation/modeling, rules, systems, policies, etc. These are the building blocks that produce interesting phenotypes. An interpolation between two genotypes should decode into a meaningful intermediate phenotype that feels like a proper remix of the two. One direction can turn the game into something more territorial/PvP/fast-paced, while another makes cooperation/PvE/strategy more valuable. Operational latent spaces become useful here.[2]
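
A minimal sketch of the interpolation idea, assuming a genotype can be flattened into a handful of numeric parameters. The `Genotype` fields and the `lerp_genotype` helper are hypothetical and not Samsara's actual encoding. In an operational latent space the blend would happen in a learned space and then be decoded; here the raw parameters stand in for that, and discrete rules are not covered at all.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Genotype:
    # Hypothetical knobs, chosen only for illustration.
    arena_size: float
    respawn_delay: float
    friendly_fire: float  # 0.0 = off, 1.0 = full damage


def lerp_genotype(a: Genotype, b: Genotype, t: float) -> Genotype:
    """Linearly blend every parameter: t=0 returns a, t=1 returns b."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    blended = {
        f.name: (1.0 - t) * getattr(a, f.name) + t * getattr(b, f.name)
        for f in fields(a)
    }
    return Genotype(**blended)


fast_pvp = Genotype(arena_size=32.0, respawn_delay=2.0, friendly_fire=1.0)
slow_coop = Genotype(arena_size=128.0, respawn_delay=10.0, friendly_fire=0.0)
midpoint = lerp_genotype(fast_pvp, slow_coop, 0.5)
```

Whether `midpoint` actually plays like a remix of the two games is the real question, and it can only be answered by decoding it into a world and running it.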

Samsara

I started with voxels because cubic blocks give games a succinct material language. World generation, player actions, and player-made structures can all live in the same grammar. Minecraft is the obvious reference point: It found a really well-compressed grammar of blocks, terrain, tools, inventories, etc. From that grammar came economies, monuments, servers like 2b2t, and a huge amount of emergence that nobody designed or planned for. A formal system whose consequences exceed the designer’s first-order intentions (because players bring values and memory into it) is endlessly fascinating and goes way beyond ‘games’.

Samsara approaches this with five pieces: (1) a GPU-resident gameplay simulation, (2) a trajectory and Action system, (3) a self-query system, (4) self-play, and (5) an inner-vs-outer loop. Let me get into each of these:

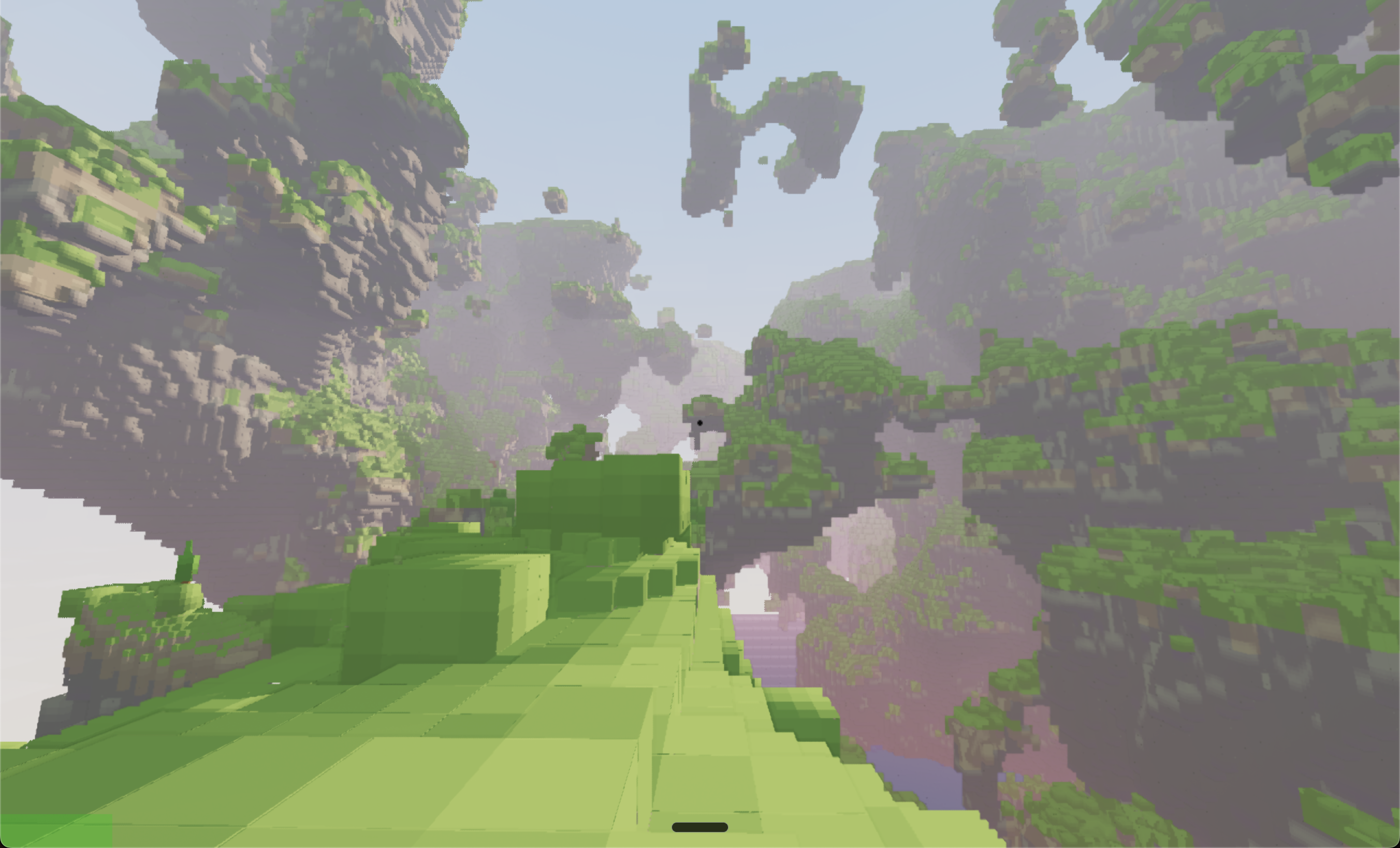

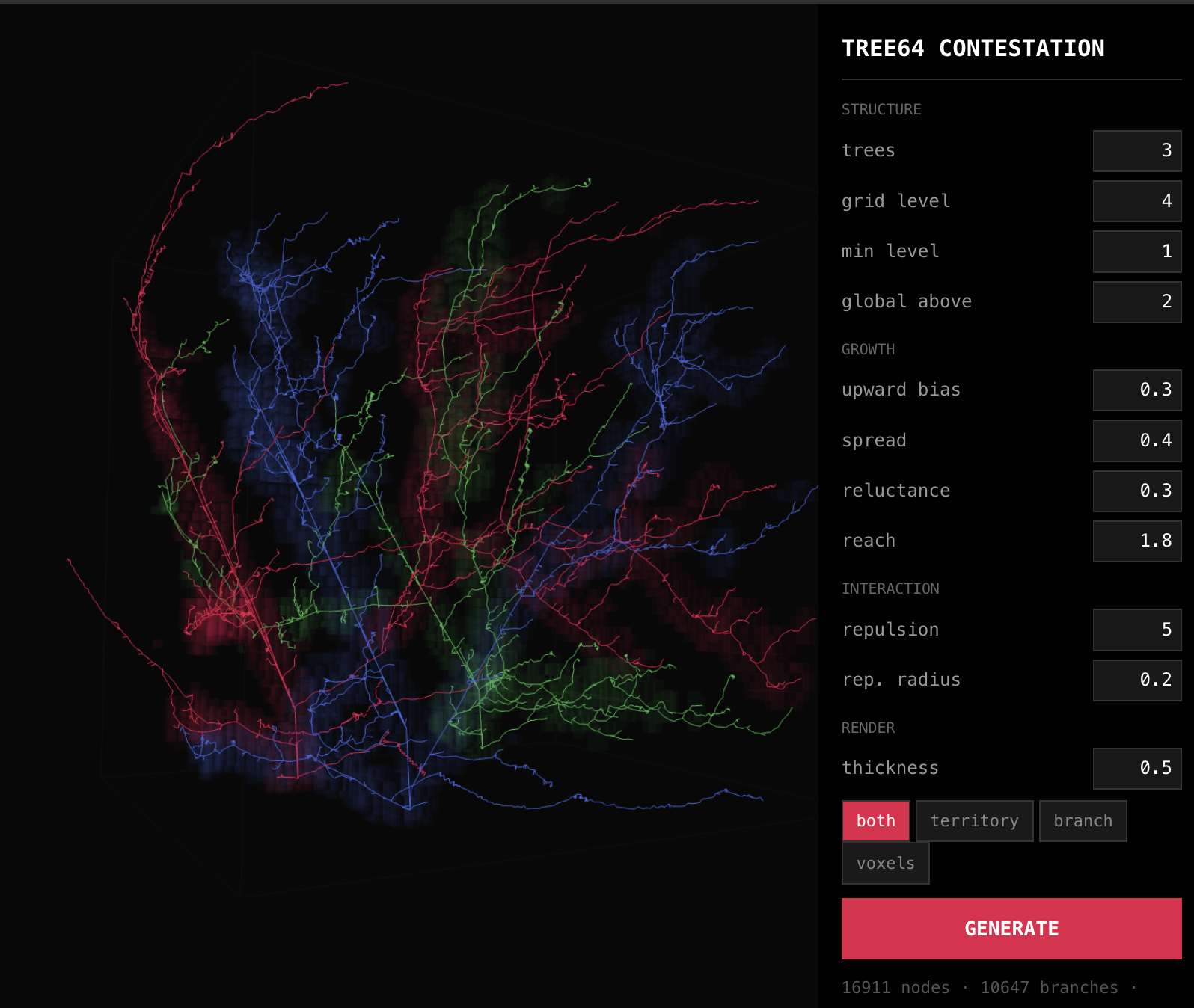

The entire simulation resides on GPU. The renderer is compute-only, using sparse voxel octrees (tree64). The current (third) rewrite is in Python with SlangPy. Python just handles orchestration while Slang owns the GPU work. I expect the implementation to keep changing as languages like Bend (see HVM) improve. The shape of the system remains the more important part: we want worlds that are fast (>10000 fps headless) and deterministic so agents and humans can search them fast enough.

GPU residency matters because searching massive game spaces requires maximum speed. Classic engines keep simulation on the CPU and only use GPU for rendering plus a few accelerators. Good luck doing vectorized training runs with thousands of envs in parallel.

In Samsara, the CPU essentially just handles I/O. One arena can become hundreds of arenas, and one ruleset can be evaluated across many seeds without dragging state through host memory every tick. The Terra port is the same lesson in miniature: world generation gets roughly 200x faster when the work moves out of Minecraft’s Java path and into parallel GPU code.

The engine has a .traj language and a unified Action set for deterministic playback, screenshots, state dumps, and metric queries. The same path runs in a window for a human or headlessly for an agent. You can train policies via self-play, replay fights, run live play, and produce validation artifacts. Recent 512-environment headless runs reach roughly 60,000 actor-control steps/s.

This ‘Trajectory-Driven Development’ allows humans to play the game, record the input stream, and turn it into a replayable .traj file. An agent can run that trajectory headlessly, capture screenshots or query state wherever it wants, and compare the result after a code change. A bug report becomes a causal path through the world. Engine work gets a stable verification loop: replay the same situation, inspect the same moment, and check whether the behavior changed in the intended direction.

The shared Action type supports that loop. Player input, agent input, debug tools, and replay files all go through the same path. The engine can reconstruct what happened, who requested it, what actually occurred, and which consequences followed.
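
To make the replay loop concrete, here is a minimal sketch under heavy assumptions. The `Action` fields, the toy `step` function, and the JSON encoding are all hypothetical stand-ins; the real .traj language and Action set are not shown in these notes. The point is only the shape: every input source produces the same record type, replay is a pure function of the recorded actions, and a state fingerprint lets you compare runs before and after a code change.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Action:
    tick: int    # simulation tick at which the action applies
    actor: str   # who requested it: a player, an agent, a debug tool
    kind: str    # only "move" is handled in this toy example
    dx: int = 0
    dy: int = 0


def step(state: dict, action: Action) -> dict:
    """Toy deterministic update: move the actor on an integer grid."""
    x, y = state.get(action.actor, (0, 0))
    if action.kind == "move":
        x, y = x + action.dx, y + action.dy
    return {**state, action.actor: (x, y)}


def replay(actions: list) -> dict:
    """Apply actions in tick order; the same input gives the same state."""
    state: dict = {}
    for action in sorted(actions, key=lambda a: a.tick):
        state = step(state, action)
    return state


def state_hash(state: dict) -> str:
    """Stable fingerprint of a state, for comparing two replays."""
    blob = json.dumps(state, sort_keys=True).encode()
    return hashlib.sha256(blob).hexdigest()


def save(actions: list) -> str:
    return json.dumps([asdict(a) for a in actions])


def load(text: str) -> list:
    return [Action(**record) for record in json.loads(text)]


recorded = [
    Action(tick=0, actor="human", kind="move", dx=1),
    Action(tick=1, actor="human", kind="move", dy=2),
]
final_state = replay(load(save(recorded)))
```

A regression check then reduces to comparing `state_hash(final_state)` against the hash stored alongside the recording.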

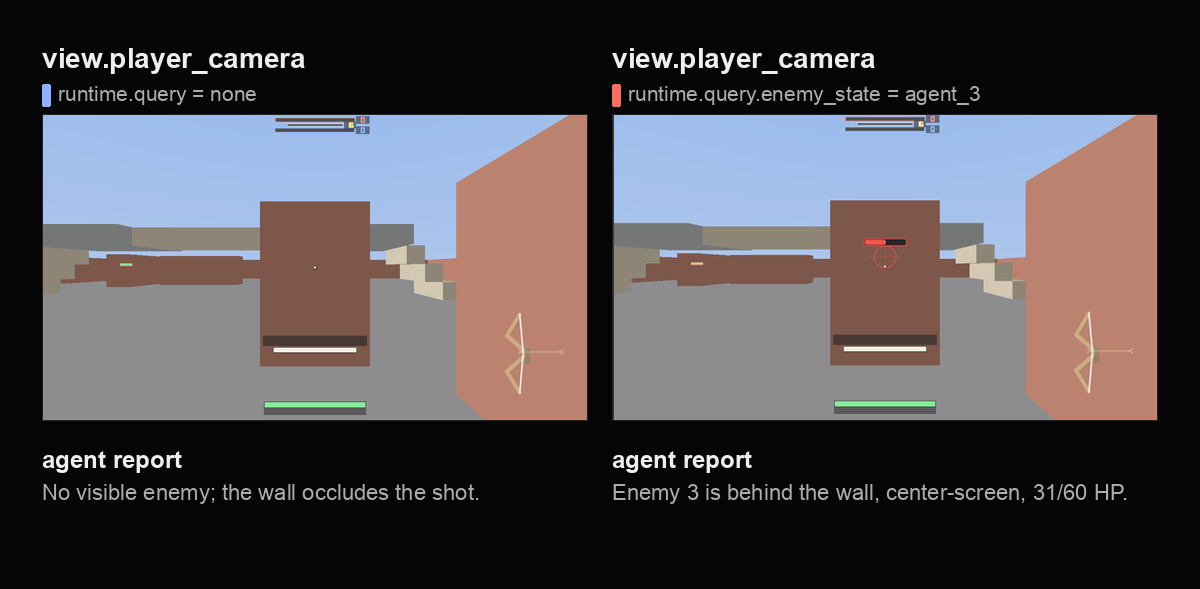

Self-querying is the most important part of making the engine legible to agents, because reasoning effectively about what occurs in games requires composable data about game state. Did bots rescue allies more reliably? Did a movement change create evasive pressure? Did a terrain change create useful cover? Samsara already has probes for focus fire, blocked shots, rescue attempts, and wall contact. As the set of probes grows, composability will become its most important property.
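
One way to picture composable probes is as small functions over an event log that can be combined into new probes. This is a sketch only: the event schema, the `count` and `ratio` combinators, and the `focus_fire` definition below are assumptions for illustration, not Samsara's probe API.

```python
from collections import Counter
from typing import Callable, Dict, List

Event = Dict[str, object]          # hypothetical event record
Probe = Callable[[List[Event]], float]


def count(predicate: Callable[[Event], bool]) -> Probe:
    """Probe that counts events matching a predicate."""
    return lambda events: float(sum(1 for e in events if predicate(e)))


def ratio(numerator: Probe, denominator: Probe) -> Probe:
    """Combine two probes into a rate; 0.0 when the denominator is zero."""
    def probe(events: List[Event]) -> float:
        d = denominator(events)
        return numerator(events) / d if d else 0.0
    return probe


def focus_fire(events: List[Event]) -> float:
    """Share of all shots aimed at the single most-targeted agent."""
    targets = Counter(e["target"] for e in events if e["type"] == "shot")
    total = sum(targets.values())
    return max(targets.values()) / total if total else 0.0


shots = count(lambda e: e["type"] == "shot")
blocked_shots = count(lambda e: e["type"] == "shot" and bool(e["blocked"]))
blocked_rate = ratio(blocked_shots, shots)

log = [
    {"type": "shot", "shooter": "a1", "target": "b1", "blocked": False},
    {"type": "shot", "shooter": "a2", "target": "b1", "blocked": True},
    {"type": "shot", "shooter": "a1", "target": "b1", "blocked": False},
    {"type": "shot", "shooter": "a2", "target": "b2", "blocked": False},
    {"type": "heal", "healer": "b2", "target": "b1"},
]
```

On this log, `blocked_rate(log)` is 0.25 and `focus_fire(log)` is 0.75. New questions become new compositions rather than new engine code.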

Battery Arena is the current constrained testbed, which pits basic bots against each other via self-play:

It is a small arena where policies fight with bow-like combat and a battery resource. Positive battery makes attacks stronger, while negative battery makes heals stronger. Shooting enemies drains toward the healing side, while healing teammates charges toward the fighting side. The mechanic tries to create role cycling from the physics of the game. One version is team-based; another lets agents output continuous alignment toward other agents, so teams can emerge from affinity choices.
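
The battery rule can be sketched in a few lines. All constants here (the battery range, the step size, the base damage and heal values, and the linear scaling) are assumptions made for illustration, and this version shifts the battery on every shot rather than only on hits. It is not the arena's actual tuning.

```python
from dataclasses import dataclass

BATTERY_MIN, BATTERY_MAX = -1.0, 1.0  # assumed range
BATTERY_STEP = 0.25                   # assumed shift per action
BASE_DAMAGE = 10.0                    # assumed
BASE_HEAL = 10.0                      # assumed


def clamp(value: float) -> float:
    return max(BATTERY_MIN, min(BATTERY_MAX, value))


@dataclass
class Fighter:
    battery: float = 0.0

    def shoot(self) -> float:
        """Positive battery boosts damage; shooting drains toward healing."""
        damage = BASE_DAMAGE * (1.0 + max(self.battery, 0.0))
        self.battery = clamp(self.battery - BATTERY_STEP)
        return damage

    def heal(self) -> float:
        """Negative battery boosts heals; healing charges toward fighting."""
        amount = BASE_HEAL * (1.0 + max(-self.battery, 0.0))
        self.battery = clamp(self.battery + BATTERY_STEP)
        return amount


fighter = Fighter(battery=0.5)
damage_log = [fighter.shoot() for _ in range(3)]  # damage falls as battery drains
heal_amount = fighter.heal()                      # battery is now negative, so heals are boosted
```

Even in this toy form, an agent that only shoots ends up better at healing than at fighting, which is the pressure toward role cycling described above.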

Different policy populations fight each other, varying in reward shaping, architecture, affordances, or whatever other part of the space you want to iterate over.

The arena is intentionally narrow: In one run I can train policies, replay fights, compare checkpoints, and ask whether focus fire appeared. Then I can change the rules and see how the game dynamics change compared to the previous run. It is small enough to understand and large enough for the engine to make behavior legible before agents improve it based on higher-level human instructions.

The larger pattern is: The game has an inner loop and an outer loop. In the inner loop, players or in-game agents pursue goals under given rules. The outer loop watches what those attempts produce and changes the game shape. For differentiable substrates, we can use gradient descent, and for ones that are discrete, we use evolution. Interestingness obviously cannot be formalized once and then maximized forever, but novelty can still help the outer loop find regions worth showing to a human.
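
As a rough sketch of the two loops, the code below runs a tiny evolutionary outer loop that selects rulesets by novelty. Everything in it is a stand-in: `inner_loop` is a toy function in place of actually playing a game, a ruleset is just a pair of floats, and the novelty score is the usual mean distance to the k nearest behaviors seen so far. It illustrates the pattern, not Samsara's search code.

```python
import math
import random


def inner_loop(ruleset: tuple) -> tuple:
    """Toy stand-in for playing the game: rules in, behavior descriptor out."""
    a, b = ruleset
    return (math.sin(3.0 * a) * b, math.cos(2.0 * b) + a)


def novelty(descriptor: tuple, archive: list, k: int = 3) -> float:
    """Mean distance to the k nearest descriptors already in the archive."""
    if not archive:
        return float("inf")
    nearest = sorted(math.dist(descriptor, other) for other in archive)[:k]
    return sum(nearest) / len(nearest)


def outer_loop(generations: int = 20, children: int = 8, seed: int = 0) -> list:
    """Mutate the current ruleset and keep the most novel child each round."""
    rng = random.Random(seed)  # seeded, so the whole search is repeatable
    parent = (0.5, 0.5)
    archive = [inner_loop(parent)]
    for _ in range(generations):
        candidates = [
            tuple(gene + rng.gauss(0.0, 0.2) for gene in parent)
            for _ in range(children)
        ]
        # Keep the child whose behavior is least like anything seen so far.
        parent = max(candidates, key=lambda c: novelty(inner_loop(c), archive))
        archive.append(inner_loop(parent))
    return archive


archive = outer_loop()
```

The archive is what a human would browse: a spread of behaviors worth looking at, rather than a single optimum.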

Using Minecraft as a Mockup Engine

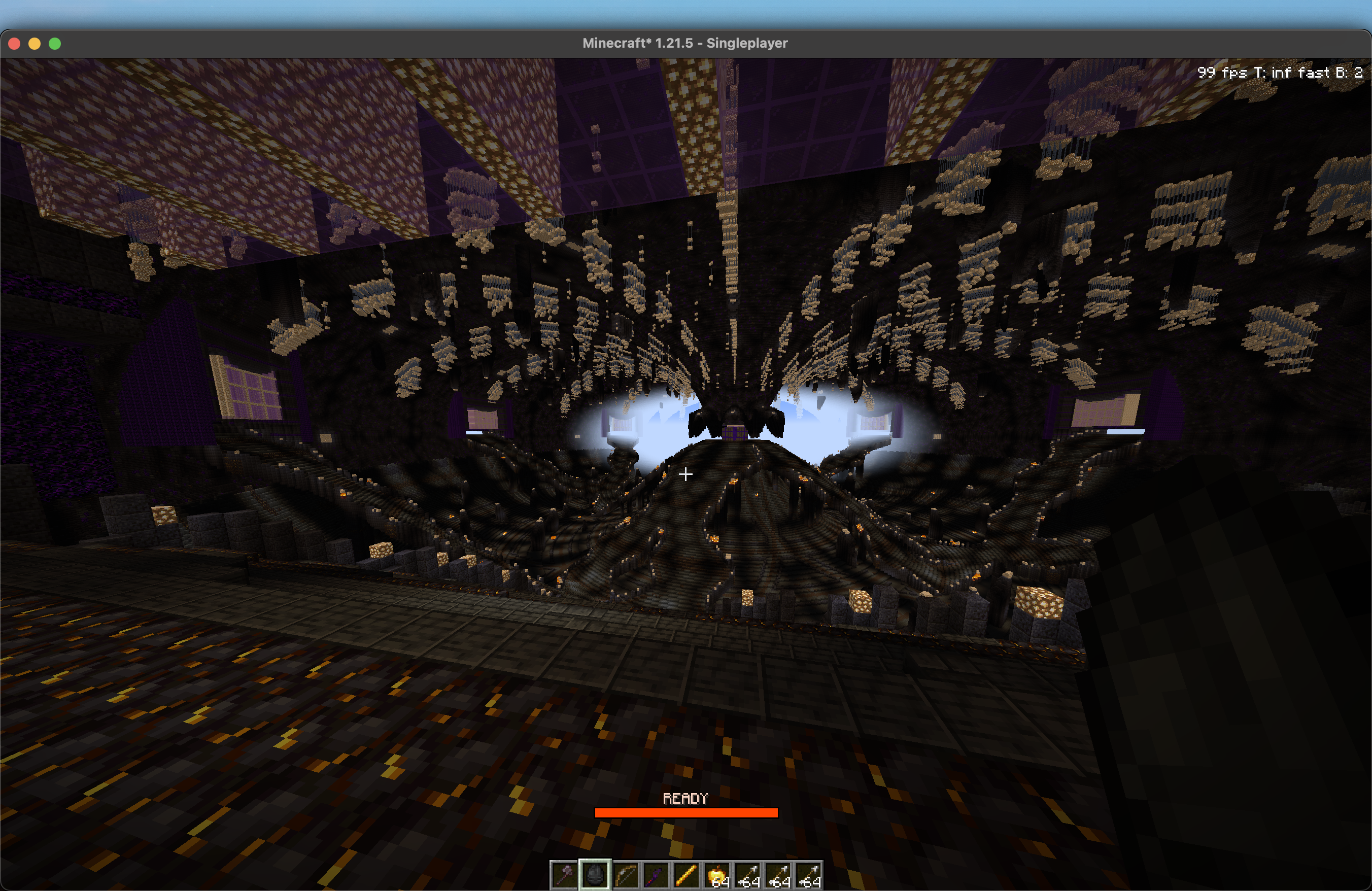

I’ve also been using a Minecraft mod as a nearby proving ground:

Minecraft already has years of accumulated features, a player base, and netcode. Writing mods lets me test movement+combat, multiplayer, and whether a mechanic feels alive. Testing inside that existing reality is much cheaper than rebuilding every primitive first, and later on, coding agents make it trivial to port that logic back into my game engine. Given AI improvement rates, waiting seems fruitful here.

Battery Arena, world generation, different game modules/scenes, and the Minecraft mockups all work on different levels of friction to solve problems on different layers of the stack. The main challenge will be integrating those modules into a coherent whole. There is an uncomfortable but fun amount of uncertainty in this. A lot of software now feels temporary due to increasing AI capability. The work that still feels worth doing is the scaffolding around the work: rules, observables, trajectories, and feedback loops that let agents search without losing the plot.

The same point holds across software more broadly. Games are a fun instantiation because they’re multimodal, encode complex dynamics and counterfactuals, and are a very demanding type of software. If you manage to set up a ‘harness’ or environment for agents to make interesting games, you’ll have large parts of the toolset to make them produce interesting software overall.

That is the current shape of Samsara. The stack will definitely change in the future, but hopefully we’ll converge enough to diverge forever :)

[1] The hard part is finding those systems. Kenneth Stanley’s work around Picbreeder, the myth of the objective, and ASAL are fun resources I recommend.

[2] Operational latent spaces are latent spaces where operations inside the space carry semantic meaning after decoding. Interpolation, rotation, or other transformations should produce coherent changes in the generated object, rather than arbitrary noise.